Social Foundations of Computation

Members

Publications

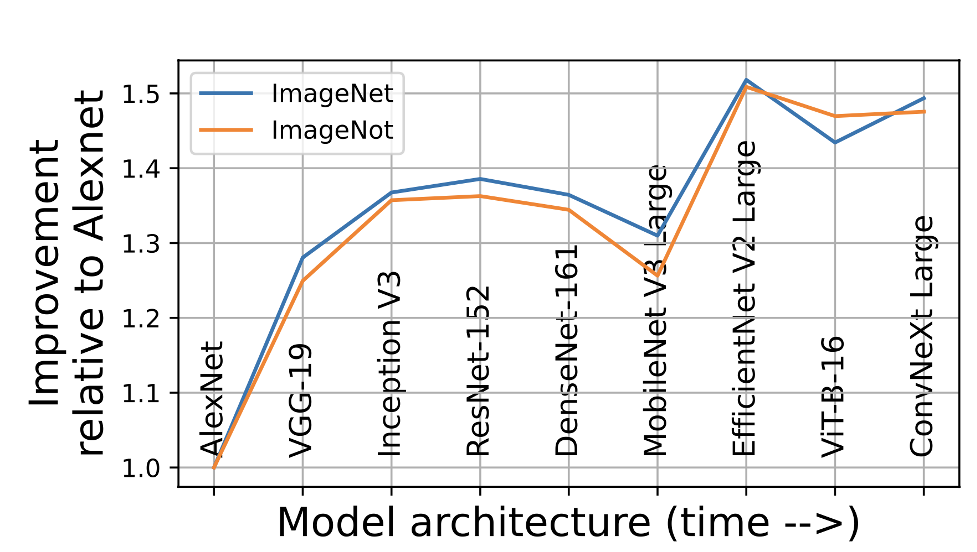

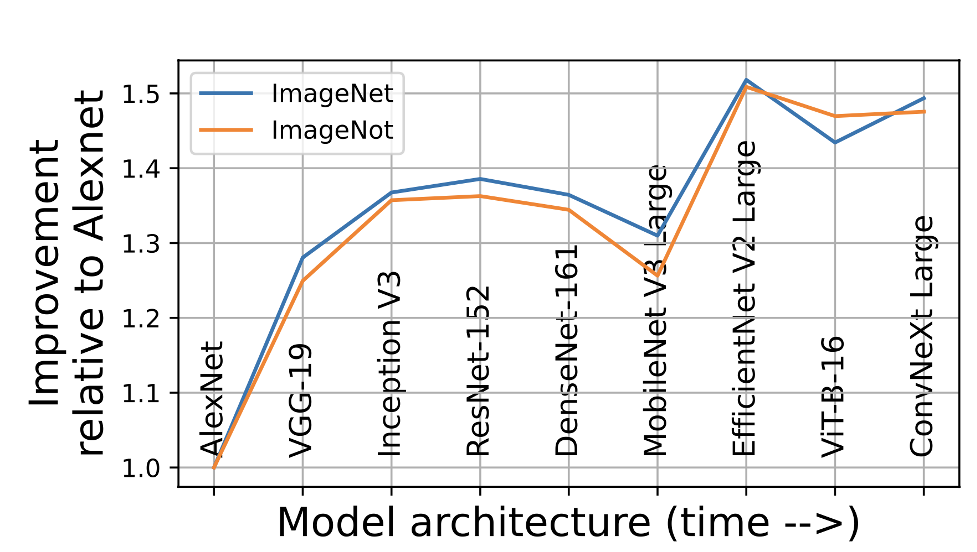

The comparison shows the same relative model improvements on ImageNet and ImageNot. In particular, the model rankings are identical.

ImageNot: A Contrast with ImageNet Preserves Model Rankings

ImageNot is a dataset created to test the external validity of model rankings from the ImageNet era. Surprisingly, models show the same relative improvements on ImageNot as they did on ImageNet, even though the two datasets are strikingly different.
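As a rough illustration of what "the same model rankings" means, the sketch below compares the ordering of models on two datasets. It is not the authors' evaluation code: the function names (`ranks`, `same_ranking`, `spearman`), the model labels, and all accuracy values are hypothetical placeholders chosen only to show the comparison, and the Spearman formula used here assumes there are no tied scores.

```python
def ranks(scores):
    """Map each model name to its rank (1 = highest accuracy)."""
    ordered = sorted(scores, key=scores.get, reverse=True)
    return {name: position for position, name in enumerate(ordered, start=1)}


def same_ranking(scores_a, scores_b):
    """Return True if both datasets order the models identically."""
    return ranks(scores_a) == ranks(scores_b)


def spearman(scores_a, scores_b):
    """Spearman rank correlation between two score tables (no ties assumed)."""
    ranks_a, ranks_b = ranks(scores_a), ranks(scores_b)
    n = len(ranks_a)
    squared_diffs = sum((ranks_a[m] - ranks_b[m]) ** 2 for m in ranks_a)
    return 1 - 6 * squared_diffs / (n * (n * n - 1))


# Placeholder accuracies, NOT results from the paper.
dataset_one = {"model_a": 0.60, "model_b": 0.70, "model_c": 0.80}
dataset_two = {"model_a": 0.40, "model_b": 0.55, "model_c": 0.65}

print(same_ranking(dataset_one, dataset_two))
print(spearman(dataset_one, dataset_two))
```

Absolute accuracies differ between the two placeholder tables, yet the ordering of the models is unchanged, which is the kind of agreement the description refers to.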

Conference Paper

ImageNot: A Contrast with ImageNet Preserves Model Rankings

Salaudeen, O., Hardt, M.

April 2024 (Submitted)
