Videos

Simultaneous Object and Manipulator Tracking

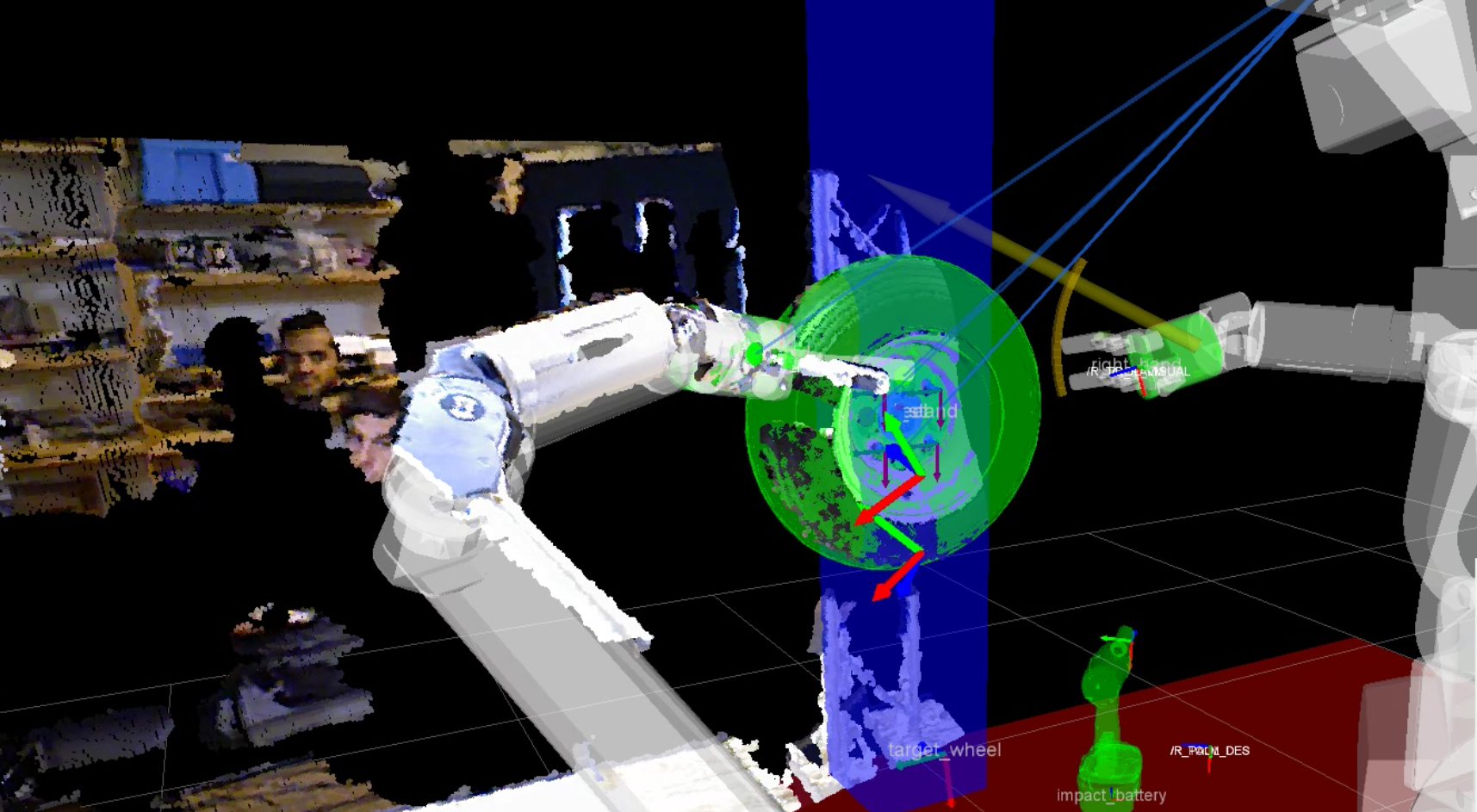

We show our real-time perception methods integrated with reactive motion generation [] on a real robotic platform performing manipulation tasks.

Robust Probabilistic Robot Arm Tracking

We propose probabilistic articulated real-time tracking for robot manipulation [].

This video shows how tracking performance varies with the sensory input used to estimate the pose and joint configuration of a robot arm. The estimate is perfect when the colored overlay exactly matches the arm in the image.

Robust Probabilistic Object Tracking

We developed a set of methods that are robust to strong, long-term occlusions and to noisy, high-dimensional measurements. The following video shows our particle-filter-based object tracking method for robust visual tracking under strong occlusions [].
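For readers unfamiliar with particle filters, the following is a minimal sketch of a generic bootstrap particle filter on a one-dimensional state. It is not the tracking method shown in the video. All function names, parameters, and noise values are assumptions chosen for illustration. The uniform term in the likelihood is one simple way to keep a filter from being pulled away by unrelated readings, such as those that occur during an occlusion.

```python
import math
import random


def particle_filter_step(particles, measurement, rng,
                         motion_std=0.1, meas_std=0.2,
                         outlier_prob=0.2, outlier_range=10.0):
    """One predict-update-resample step for a 1-D state (illustrative only)."""
    # Predict: diffuse each particle with a random-walk motion model.
    predicted = [p + rng.gauss(0.0, motion_std) for p in particles]

    # Update: weight each particle by a mixture likelihood. The uniform
    # component keeps weights nearly flat when the measurement is far from
    # every particle, so an outlier reading changes the particle set little.
    weights = []
    for p in predicted:
        gauss = math.exp(-0.5 * ((measurement - p) / meas_std) ** 2) / (
            meas_std * math.sqrt(2.0 * math.pi))
        weights.append((1.0 - outlier_prob) * gauss
                       + outlier_prob / outlier_range)

    # Resample: draw a new particle set in proportion to the weights.
    return rng.choices(predicted, weights=weights, k=len(predicted))


def estimate(particles):
    """Posterior mean of the particle set."""
    return sum(particles) / len(particles)


def run_demo(seed=0):
    """Track a static target; steps 10 to 14 simulate an occlusion."""
    rng = random.Random(seed)
    true_pos = 1.0
    particles = [rng.uniform(-2.0, 4.0) for _ in range(500)]
    for step in range(30):
        if 10 <= step < 15:
            # Simulated occlusion: the sensor returns an unrelated reading.
            z = rng.uniform(-5.0, 5.0)
        else:
            z = true_pos + rng.gauss(0.0, 0.2)
        particles = particle_filter_step(particles, z, rng)
    return estimate(particles)


if __name__ == "__main__":
    print(run_demo())
```

Calling `run_demo()` returns the posterior mean after 30 steps, which can be compared against the assumed true position of 1.0.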

Code & Data

Open Source Code

We released our methods as open-source code on GitHub in the Bayesian object tracking project.

We provide an easy entry point on our getting-started page.

Data Sets

We also provide data sets that allow quantitative evaluation of alternative methods. They contain real depth images from RGB-D cameras and high-quality ground truth annotations collected with a VICON motion capture system.

Robot Arm Tracking

The pictures below show three samples from the data set, which was recorded on our robot Apollo. The sequences cover fast to slow robot arm and camera motion, with occlusions ranging from none to very severe and long-term.

![]()

To download the data set and for further details, see the GitHub pages.

Object Tracking

The picture below shows each object contained in the data set.

![]()

To download the data set and for further details, see the GitHub pages.

Members

Publications