AirCapRL: Autonomous Aerial Human Motion Capture Using Deep Reinforcement Learning

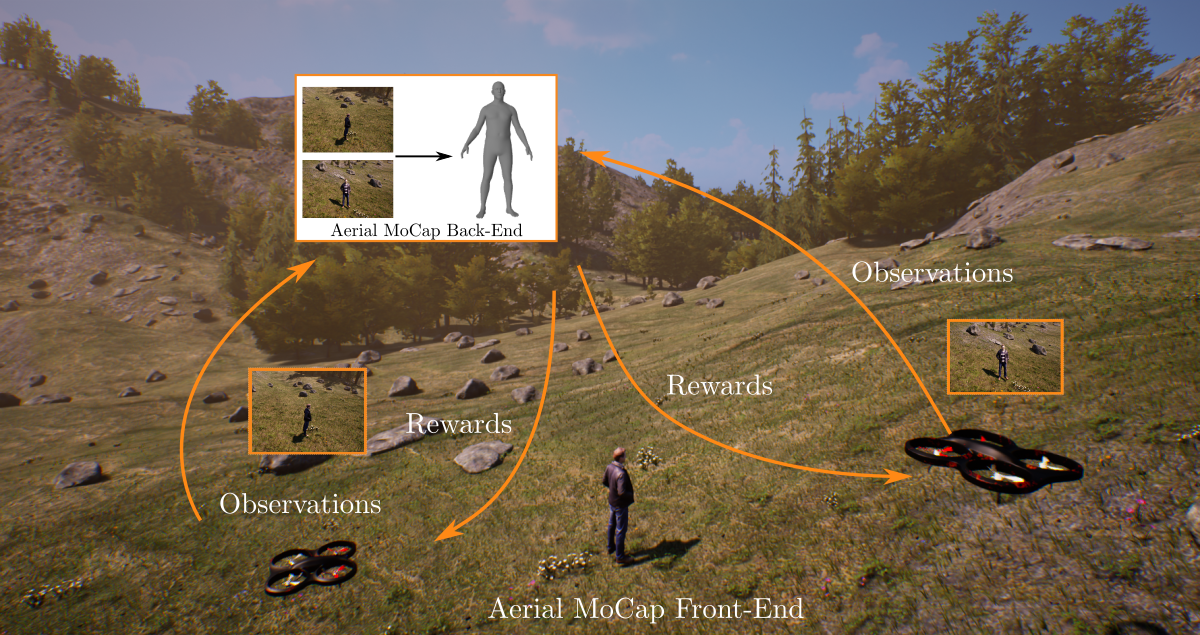

In this letter, we introduce a deep reinforcement learning (DRL)-based multi-robot formation controller for the task of autonomous aerial human motion capture (MoCap). We focus on vision-based MoCap, where the objective is to estimate the trajectory of the body pose and shape of a single moving person using multiple micro aerial vehicles. State-of-the-art solutions to this problem are based on classical control methods, which depend on hand-crafted system and observation models. Such models are difficult to derive and to generalize across different systems. Moreover, the non-linearities and non-convexities of these models lead to sub-optimal controls. In our work, we formulate this problem as a sequential decision-making task to achieve the vision-based motion capture objectives, and solve it using a deep neural network-based RL method. We leverage proximal policy optimization (PPO) to train a stochastic decentralized control policy for formation control. The neural network is trained in a parallelized setup in synthetic environments. We performed extensive simulation experiments to validate our approach. Finally, real-robot experiments demonstrate that our policies generalize to real-world conditions.
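The abstract names PPO and a stochastic policy but gives no formulas. As a rough illustration only, the sketch below implements the standard PPO clipped surrogate objective, L^CLIP = mean over t of min(r_t A_t, clip(r_t, 1 - eps, 1 + eps) A_t), where r_t is the probability ratio between the new and old policies and A_t is an advantage estimate. It is not the authors' AirCapRL code, reward, or network. The diagonal Gaussian action distribution, the function names `gaussian_log_prob` and `ppo_clip_objective`, and the default `clip_eps = 0.2` are all illustrative assumptions.

```python
import math
from typing import Sequence


def gaussian_log_prob(action: Sequence[float], mean: Sequence[float],
                      log_std: Sequence[float]) -> float:
    """Log-density of an action under a diagonal Gaussian policy.

    Assumption: the stochastic policy outputs a per-dimension mean and
    log standard deviation (a common choice for continuous control).
    """
    total = 0.0
    for a, mu, ls in zip(action, mean, log_std):
        std = math.exp(ls)
        total += -0.5 * ((a - mu) / std) ** 2 - ls - 0.5 * math.log(2.0 * math.pi)
    return total


def ppo_clip_objective(new_log_probs: Sequence[float],
                       old_log_probs: Sequence[float],
                       advantages: Sequence[float],
                       clip_eps: float = 0.2) -> float:
    """Mean clipped surrogate objective L^CLIP (to be maximized)."""
    terms = []
    for new_lp, old_lp, adv in zip(new_log_probs, old_log_probs, advantages):
        # Probability ratio r_t = pi_new(a|s) / pi_old(a|s), computed in log space.
        ratio = math.exp(new_lp - old_lp)
        # Keep the ratio inside [1 - eps, 1 + eps].
        clipped = min(max(ratio, 1.0 - clip_eps), 1.0 + clip_eps)
        # Pessimistic (lower) bound of the unclipped and clipped terms.
        terms.append(min(ratio * adv, clipped * adv))
    return sum(terms) / len(terms)


if __name__ == "__main__":
    # Identical old and new policies: every ratio is 1, so the objective
    # reduces to the mean advantage.
    log_probs = [0.0, -1.0]
    print(ppo_clip_objective(log_probs, log_probs, [1.0, 3.0]))  # 2.0
```

The minimum of the clipped and unclipped terms is what keeps each policy update conservative. With a positive advantage, raising an action's probability beyond 1 + eps earns no extra objective. With a negative advantage, the unclipped (more negative) term still applies.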

| Field | Value |
| --- | --- |
| Author(s) | Rahul Tallamraju, Nitin Saini, Elia Bonetto, Michael Pabst, Yu Tang Liu, Michael Black, and Aamir Ahmad |
| Journal | IEEE Robotics and Automation Letters |
| Volume | 5 |
| Number (issue) | 4 |
| Pages | 6678–6685 |
| Year | 2020 |
| Month | October |
| Publisher | IEEE |
| BibTeX Type | Article (article) |
| DOI | 10.1109/LRA.2020.3013906 |
| State | Published |
| URL | https://ieeexplore.ieee.org/document/9158379 |
| Digital | True |
| Electronic Archiving | grant_archive |
| Note | Also accepted and presented at the 2020 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS). |

BibTeX

```bibtex
@article{aircaprl,
  title = {AirCapRL: Autonomous Aerial Human Motion Capture Using Deep Reinforcement Learning},
  journal = {IEEE Robotics and Automation Letters},
  abstract = {In this letter, we introduce a deep reinforcement learning (DRL)-based multi-robot formation controller for the task of autonomous aerial human motion capture (MoCap). We focus on vision-based MoCap, where the objective is to estimate the trajectory of the body pose and shape of a single moving person using multiple micro aerial vehicles. State-of-the-art solutions to this problem are based on classical control methods, which depend on hand-crafted system and observation models. Such models are difficult to derive and to generalize across different systems. Moreover, the non-linearities and non-convexities of these models lead to sub-optimal controls. In our work, we formulate this problem as a sequential decision-making task to achieve the vision-based motion capture objectives, and solve it using a deep neural network-based RL method. We leverage proximal policy optimization (PPO) to train a stochastic decentralized control policy for formation control. The neural network is trained in a parallelized setup in synthetic environments. We performed extensive simulation experiments to validate our approach. Finally, real-robot experiments demonstrate that our policies generalize to real-world conditions.},
  volume = {5},
  number = {4},
  pages = {6678--6685},
  publisher = {IEEE},
  month = oct,
  year = {2020},
  note = {Also accepted and presented at the 2020 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS).},
  author = {Tallamraju, Rahul and Saini, Nitin and Bonetto, Elia and Pabst, Michael and Liu, Yu Tang and Black, Michael and Ahmad, Aamir},
  doi = {10.1109/LRA.2020.3013906},
  url = {https://ieeexplore.ieee.org/document/9158379},
  month_numeric = {10}
}
```