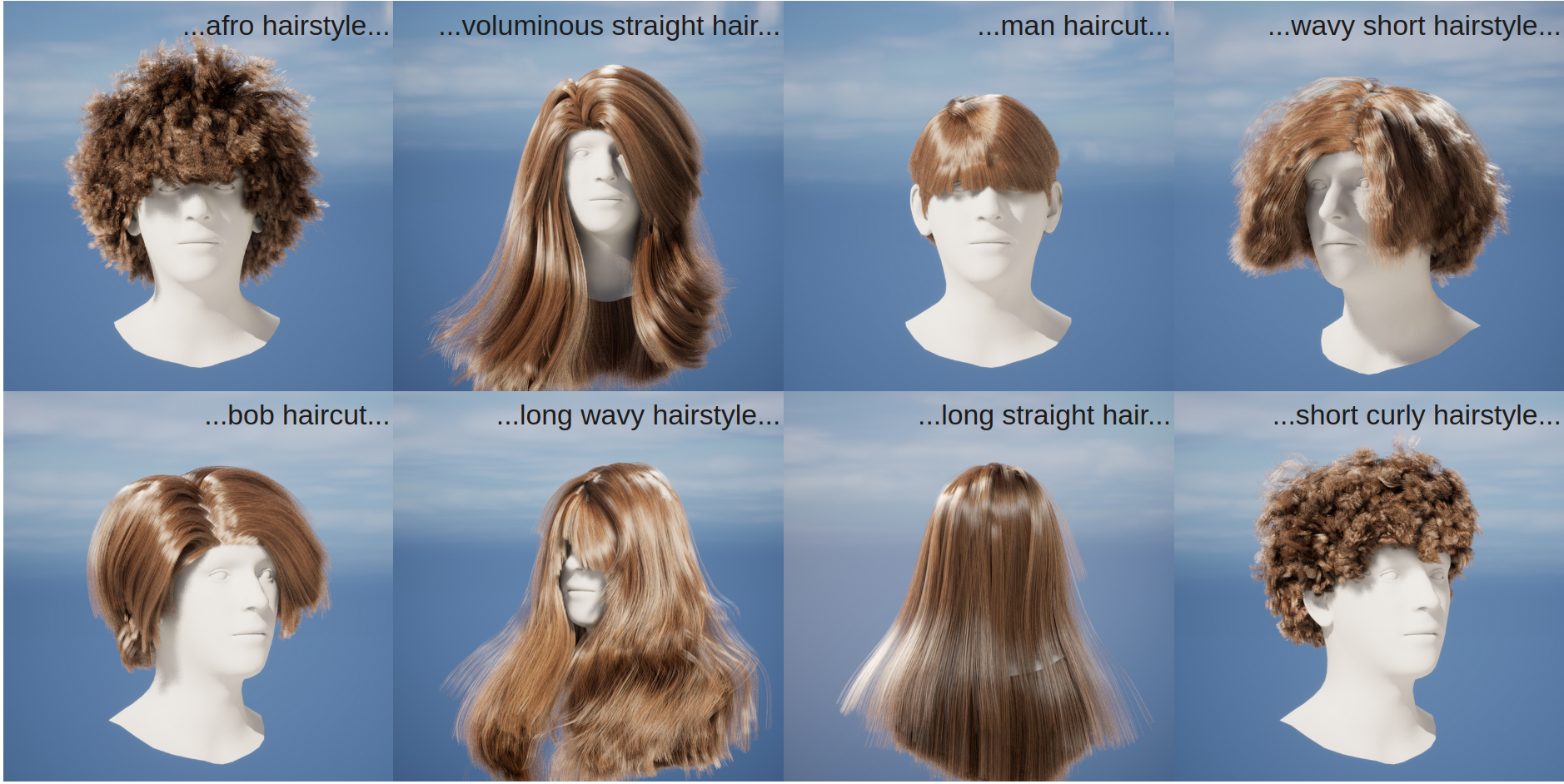

HAAR: Text-Conditioned Generative Model of 3D Strand-based Human Hairstyles

We present HAAR, a new strand-based generative model for 3D human hairstyles. Given textual inputs, HAAR produces 3D hairstyles that are ready to be used as assets in computer graphics and animation applications. Current AI-based generative models take advantage of powerful 2D priors to reconstruct 3D content in the form of point clouds, meshes, or volumetric functions. However, because they rely on 2D priors, they are intrinsically limited to recovering only the visible parts. Highly occluded hair structures cannot be reconstructed with these methods; they model only the outer shell, which is not suitable for physics-based rendering or simulation pipelines. In contrast, we propose the first text-guided generative method that uses 3D hair strands as its underlying representation. Leveraging 2D visual question-answering (VQA) systems, we automatically annotate synthetic hair models that are generated from a small set of artist-created hairstyles. This allows us to train a latent diffusion model that operates in a common hairstyle UV space. In qualitative and quantitative studies, we demonstrate the capabilities of the proposed model and compare it to existing hairstyle generation approaches.
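The abstract names a latent diffusion model over a hairstyle UV space but gives no details of its formulation. As a rough illustration only, the sketch below shows the generic DDPM forward-noising step, z_t = sqrt(ᾱ_t)·z_0 + sqrt(1 − ᾱ_t)·ε, applied to a latent map laid out on a UV grid. The grid size, channel count, linear beta schedule, and the helper names (`linear_beta_schedule`, `alpha_bars`, `q_sample`) are assumptions chosen for illustration; this is not HAAR's implementation.

```python
import math
import random


def linear_beta_schedule(num_steps, beta_start=1e-4, beta_end=0.02):
    """Linearly spaced noise variances beta_1..beta_T (a common DDPM choice, assumed here)."""
    if num_steps == 1:
        return [beta_start]
    step = (beta_end - beta_start) / (num_steps - 1)
    return [beta_start + i * step for i in range(num_steps)]


def alpha_bars(betas):
    """Cumulative products alpha_bar_t = prod_{s<=t} (1 - beta_s)."""
    out, prod = [], 1.0
    for b in betas:
        prod *= 1.0 - b
        out.append(prod)
    return out


def q_sample(z0, t, abar, rng):
    """Sample z_t ~ N(sqrt(abar_t) * z0, (1 - abar_t) * I) for a latent map z0.

    z0 is a hypothetical H x W x C latent grid over a UV space, stored as nested
    lists. Returns (z_t, eps) so that a denoiser could be trained to predict eps.
    """
    a = abar[t]
    sa, sn = math.sqrt(a), math.sqrt(1.0 - a)
    zt, eps = [], []
    for row in z0:
        zrow, erow = [], []
        for texel in row:
            e = [rng.gauss(0.0, 1.0) for _ in texel]
            zrow.append([sa * x + sn * n for x, n in zip(texel, e)])
            erow.append(e)
        zt.append(zrow)
        eps.append(erow)
    return zt, eps


# Usage with a hypothetical 4 x 4 UV grid carrying 8 latent channels per texel.
rng = random.Random(0)
H, W, C = 4, 4, 8
z0 = [[[rng.uniform(-1.0, 1.0) for _ in range(C)] for _ in range(W)] for _ in range(H)]
abar = alpha_bars(linear_beta_schedule(1000))
zt, eps = q_sample(z0, 999, abar, rng)  # t = 999 is the last (noisiest) step, 0-indexed
```

Because ᾱ_t shrinks toward zero as t grows, the final latent map is almost pure Gaussian noise; a generator of this kind would learn to reverse that process, optionally conditioned on a text embedding.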

Publications