Database

We have released a large-scale robotic grasping database to the research community. It is freely available at http://grasp-database.dkappler.de.

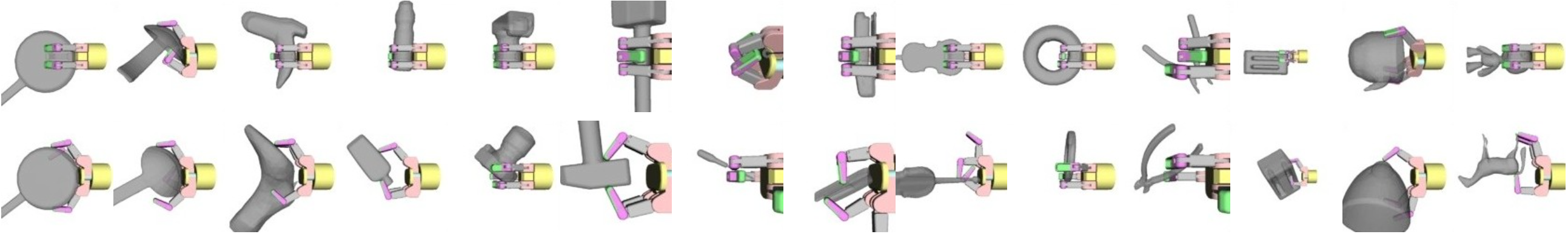

The database provides grasps applied to more than 700 distinct objects from over 80 categories. The grasps are generated in simulation and evaluated using the standard epsilon-metric and a new physics-metric. In crowdsourcing experiments, we confirmed that the proposed physics-metric is a more consistent predictor of grasp success than the epsilon-metric.

In total, the database provides around 500,000 labeled grasps, each annotated with stability labels from both metrics. We also simulate noisy and incomplete perception of the objects from different viewpoints using a realistic model of an RGB-D camera. This lets us link a representation of local object shape to each grasp.

With its scale, per-grasp stability labels, and linked shape representations, the database is well suited to learning-based grasping methods that can leverage large amounts of data.

Features include:

- Docker Container for easy installation of all dependencies

- Python Interface

- Data Visualization tool

- Efficient HDF5 database format

- Catkin Workspace

- Extensibility (Objects, Hands, Feature Representations)
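Because the data ships in HDF5, a first step with any downloaded file is usually to inspect its layout. The following is a minimal sketch using the generic `h5py` library rather than the database's own Python interface, whose API is not described here. The file name, the group name `example_object`, and the dataset names `grasp_pose`, `label_physics`, and `label_epsilon` are hypothetical stand-ins, not the database's actual schema; the sketch writes a tiny dummy file so that it runs on its own.

```python
import os
import tempfile

import h5py
import numpy as np


def list_datasets(path):
    """Return {dataset_path: (shape, dtype)} for every dataset in an HDF5 file."""
    found = {}

    def visit(name, obj):
        # visititems calls this for every group and dataset; keep datasets only.
        if isinstance(obj, h5py.Dataset):
            found[name] = (obj.shape, str(obj.dtype))

    with h5py.File(path, "r") as f:
        f.visititems(visit)
    return found


# Build a tiny stand-in file. All names below are hypothetical and do not
# reflect the real layout of the grasping database.
tmp_dir = tempfile.mkdtemp()
demo_path = os.path.join(tmp_dir, "demo_grasps.h5")
with h5py.File(demo_path, "w") as f:
    grp = f.create_group("example_object")
    grp.create_dataset("grasp_pose", data=np.zeros((4, 7), dtype=np.float32))
    grp.create_dataset("label_physics", data=np.array([1, 0, 1, 1], dtype=np.int8))
    grp.create_dataset("label_epsilon", data=np.array([1, 1, 0, 1], dtype=np.int8))

# Walk the file without assuming anything about its structure.
layout = list_datasets(demo_path)
for name, (shape, dtype) in sorted(layout.items()):
    print(name, shape, dtype)

# Compare the two (dummy) stability labels for each grasp.
with h5py.File(demo_path, "r") as f:
    physics = f["example_object/label_physics"][:]
    epsilon = f["example_object/label_epsilon"][:]
agreement = float(np.mean(physics == epsilon))
print("label agreement:", agreement)
```

Pointing `list_datasets` at a real file from the database should reveal the actual group and dataset names, which can then replace the placeholders above.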
