SurfaceNet: An End-to-End 3D Neural Network for Multiview Stereopsis

2017

Conference Paper


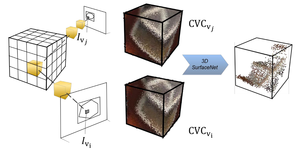

This paper proposes an end-to-end learning framework for multiview stereopsis. We term the network SurfaceNet. It takes a set of images and their corresponding camera parameters as input and directly infers the 3D model. The key advantage of the framework is that both photo-consistency and the geometric relations of the surface structure can be learned directly for the purpose of multiview stereopsis in an end-to-end fashion. SurfaceNet is a fully 3D convolutional network, which is achieved by encoding the camera parameters together with the images in a 3D voxel representation. We evaluate SurfaceNet on the large-scale DTU benchmark. Code is available at https://github.com/mjiUST/SurfaceNet
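To make the idea of "encoding the camera parameters together with the images in a 3D voxel representation" more concrete, the following is a minimal sketch and not the authors' implementation. It assumes a pinhole camera given as a 3x4 projection matrix and colors each voxel of a small grid with the pixel its center projects to. The function name `colored_voxel_cube`, the nearest-pixel lookup, and the choice of black for voxels outside the view are all assumptions made for illustration.

```python
import math


def colored_voxel_cube(image, P, origin, voxel_size, n):
    """Project every voxel center of an n x n x n grid into one view.

    image      : list of rows, image[v][u] is an (r, g, b) tuple
    P          : 3x4 camera projection matrix as nested lists
    origin     : (x, y, z) of the grid's minimum corner in world coordinates
    voxel_size : edge length of one voxel
    Returns cube[i][j][k], the color this view sees at that voxel.
    """
    height, width = len(image), len(image[0])
    empty = (0, 0, 0)  # assumption: voxels outside the view are left black
    cube = [[[empty] * n for _ in range(n)] for _ in range(n)]
    for i in range(n):
        for j in range(n):
            for k in range(n):
                # Homogeneous world coordinates of the voxel center.
                X = [origin[0] + (i + 0.5) * voxel_size,
                     origin[1] + (j + 0.5) * voxel_size,
                     origin[2] + (k + 0.5) * voxel_size,
                     1.0]
                # Apply the projection matrix: (x, y, w) = P @ X.
                x, y, w = (sum(P[r][c] * X[c] for c in range(4)) for r in range(3))
                if w <= 0:  # voxel lies behind the camera
                    continue
                # Nearest-pixel lookup (an assumption; interpolation also works).
                u = math.floor(x / w + 0.5)
                v = math.floor(y / w + 0.5)
                if 0 <= u < width and 0 <= v < height:
                    cube[i][j][k] = image[v][u]
    return cube


# Toy example: a 4x4 image whose pixel color encodes its own (u, v) position,
# seen by a hypothetical camera at the origin looking along +z.
image = [[(u, v, 0) for u in range(4)] for v in range(4)]
P = [[2, 0, 0, 0], [0, 2, 0, 0], [0, 0, 1, 0]]
cube = colored_voxel_cube(image, P, origin=(0.0, 0.0, 0.5), voxel_size=1.0, n=2)
```

Because the camera matrix decides which pixel lands in which voxel, the resulting grid carries both image content and camera geometry, which is what allows a network operating on it to use only 3D convolutions.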

| Field | Value |
| --- | --- |
| Author(s) | Ji, Mengqi and Gall, Juergen and Zheng, Haitian and Liu, Yebin and Fang, Lu |
| Book Title | IEEE International Conference on Computer Vision (ICCV), 2017 |
| Year | 2017 |
| Publisher | IEEE Computer Society |
| Department(s) | Autonomes Maschinelles Sehen |
| Bibtex Type | Conference Paper (inproceedings) |
| Paper Type | Conference |
| Event Name | 2017 IEEE International Conference on Computer Vision (ICCV) |
| Event Place | Venice, Italy |
| URL | https://github.com/mjiUST/SurfaceNet |


BibTeX:

@inproceedings{ji2017surfacenet,
  title = {SurfaceNet: An End-to-End 3D Neural Network for Multiview Stereopsis},
  author = {Ji, Mengqi and Gall, Juergen and Zheng, Haitian and Liu, Yebin and Fang, Lu},
  booktitle = {IEEE International Conference on Computer Vision (ICCV), 2017},
  publisher = {IEEE Computer Society},
  year = {2017},
  doi = {},
  url = {https://github.com/mjiUST/SurfaceNet}
}
