2024

Rokhmanova, N., Martus, J., Faulkner, R., Fiene, J., Kuchenbecker, K. J.

GaitGuide: A Wearable Device for Vibrotactile Motion Guidance

Workshop paper (3 pages) presented at the ICRA Workshop on Advancing Wearable Devices and Applications Through Novel Design, Sensing, Actuation, and AI, Yokohama, Japan, May 2024 (misc) Accepted

Javot, B., Nguyen, V. H., Ballardini, G., Kuchenbecker, K. J.

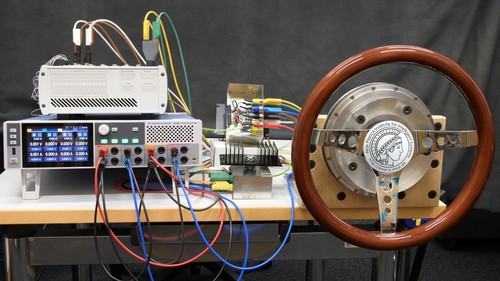

CAPT Motor: A Strong Direct-Drive Rotary Haptic Interface

Hands-on demonstration presented at the IEEE Haptics Symposium, Long Beach, USA, April 2024 (misc)

Sanchez-Tamayo, N., Yoder, Z., Ballardini, G., Rothemund, P., Keplinger, C., Kuchenbecker, K. J.

Cutaneous Electrohydraulic (CUTE) Wearable Devices for Multimodal Haptic Feedback

Extended abstract (1 page) presented at the IEEE RoboSoft Workshop on Multimodal Soft Robots for Multifunctional Manipulation, Locomotion, and Human-Machine Interaction, San Diego, USA, April 2024 (misc)

Sanchez-Tamayo, N., Yoder, Z., Ballardini, G., Rothemund, P., Keplinger, C., Kuchenbecker, K. J.

Cutaneous Electrohydraulic Wearable Devices for Expressive and Salient Haptic Feedback

Hands-on demonstration presented at the IEEE Haptics Symposium, Long Beach, USA, April 2024 (misc)

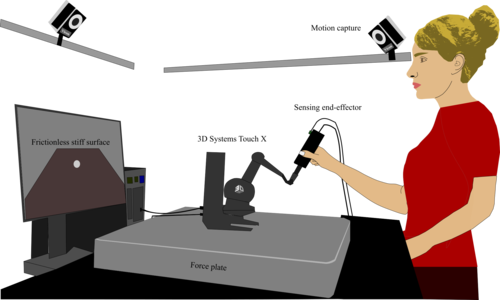

Fazlollahi, F., Seifi, H., Ballardini, G., Taghizadeh, Z., Schulz, A., MacLean, K. E., Kuchenbecker, K. J.

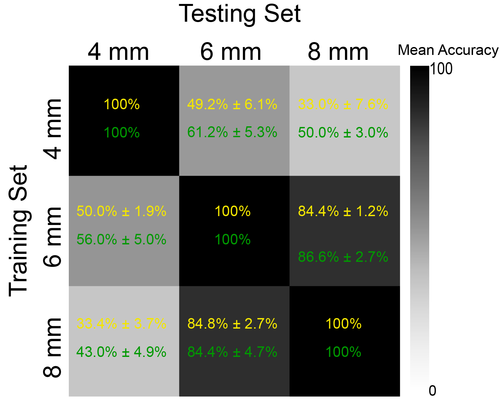

Quantifying Haptic Quality: External Measurements Match Expert Assessments of Stiffness Rendering Across Devices

Work-in-progress paper (2 pages) presented at the IEEE Haptics Symposium, Long Beach, USA, April 2024 (misc)

Sanchez-Tamayo, N., Yoder, Z., Ballardini, G., Rothemund, P., Keplinger, C., Kuchenbecker, K. J.

Demonstration: Cutaneous Electrohydraulic (CUTE) Wearable Devices for Expressive and Salient Haptic Feedback

Hands-on demonstration presented at the IEEE RoboSoft Conference, San Diego, USA, April 2024 (misc)

Schulz, A., Serhat, G., Kuchenbecker, K. J.

Adapting a High-Fidelity Simulation of Human Skin for Comparative Touch Sensing in the Elephant Trunk

Abstract presented at the Society for Integrative and Comparative Biology Annual Meeting (SICB), Seattle, USA, January 2024 (misc)

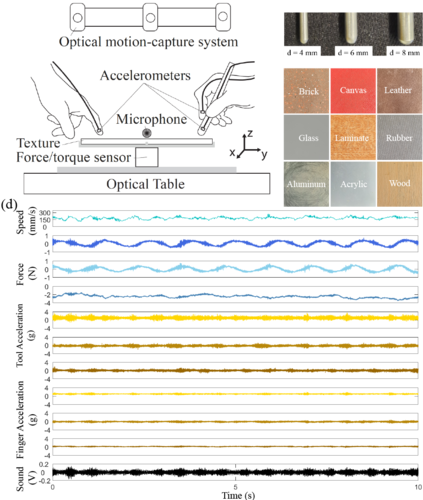

Khojasteh, B., Shao, Y., Kuchenbecker, K. J.

MPI-10: Haptic-Auditory Measurements from Tool-Surface Interactions

Dataset published as a companion to the journal article "Robust Surface Recognition with the Maximum Mean Discrepancy: Degrading Haptic-Auditory Signals through Bandwidth and Noise" in IEEE Transactions on Haptics, January 2024 (misc)

Schulz, A., Kaufmann, L., Brecht, M., Richter, G., Kuchenbecker, K. J.

Whiskers That Don’t Whisk: Unique Structure From the Absence of Actuation in Elephant Whiskers

Abstract presented at the Society for Integrative and Comparative Biology Annual Meeting (SICB), Seattle, USA, January 2024 (misc)

Landin, N., Romano, J. M., McMahan, W., Kuchenbecker, K. J.

Discrete Fourier Transform Three-to-One (DFT321): Code

MATLAB code for the Discrete Fourier Transform Three-to-One (DFT321), 2024 (misc)

2023

Khojasteh, B., Shao, Y., Kuchenbecker, K. J.

Seeking Causal, Invariant, Structures with Kernel Mean Embeddings in Haptic-Auditory Data from Tool-Surface Interaction

Workshop paper (4 pages) presented at the IROS Workshop on Causality for Robotics: Answering the Question of Why, Detroit, USA, October 2023 (misc)

Allemang–Trivalle, A.

Enhancing Surgical Team Collaboration and Situation Awareness through Multimodal Sensing

Proceedings of the ACM International Conference on Multimodal Interaction (ICMI), pages: 716-720, Extended abstract (5 pages) presented at the ACM International Conference on Multimodal Interaction (ICMI) Doctoral Consortium, Paris, France, October 2023 (misc)

Garrofé, G., Schoeffmann, C., Zangl, H., Kuchenbecker, K. J., Lee, H.

NearContact: Accurate Human Detection using Tomographic Proximity and Contact Sensing with Cross-Modal Attention

Extended abstract (4 pages) presented at the International Workshop on Human-Friendly Robotics (HFR), Munich, Germany, September 2023 (misc)

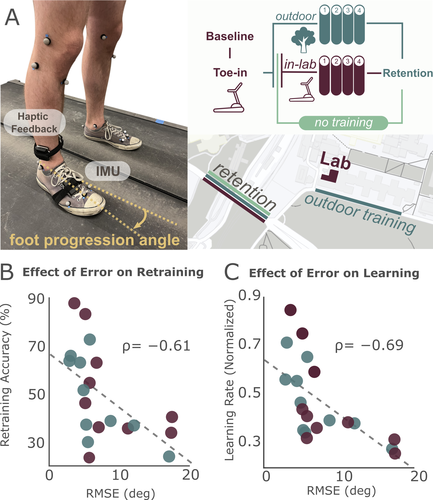

Rokhmanova, N., Pearl, O., Kuchenbecker, K. J., Halilaj, E.

The Role of Kinematics Estimation Accuracy in Learning with Wearable Haptics

Abstract presented at the American Society of Biomechanics (ASB), Knoxville, USA, August 2023 (misc)

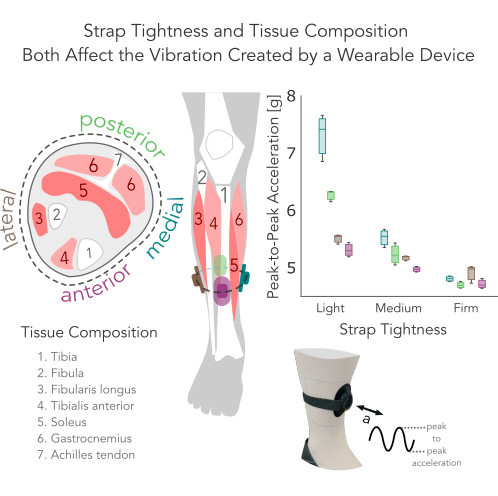

Rokhmanova, N., Faulkner, R., Martus, J., Fiene, J., Kuchenbecker, K. J.

Strap Tightness and Tissue Composition Both Affect the Vibration Created by a Wearable Device

Work-in-progress paper (1 page) presented at the IEEE World Haptics Conference (WHC), Delft, The Netherlands, July 2023 (misc)

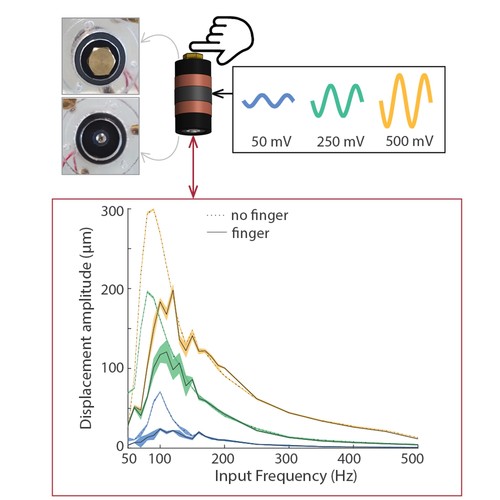

Ballardini, G., Kuchenbecker, K. J.

Toward a Device for Reliable Evaluation of Vibrotactile Perception

Work-in-progress paper (1 page) presented at the IEEE World Haptics Conference (WHC), Delft, The Netherlands, July 2023 (misc)

Khojasteh, B., Solowjow, F., Trimpe, S., Kuchenbecker, K. J.

Multimodal Multi-User Surface Recognition with the Kernel Two-Sample Test: Code

Code published as a companion to the journal article "Multimodal Multi-User Surface Recognition with the Kernel Two-Sample Test" in IEEE Transactions on Automation Science and Engineering, July 2023 (misc)

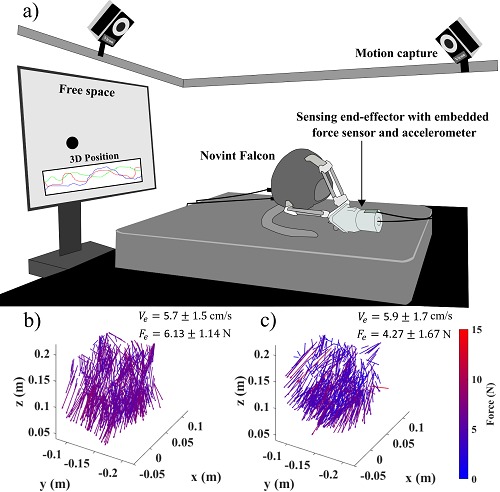

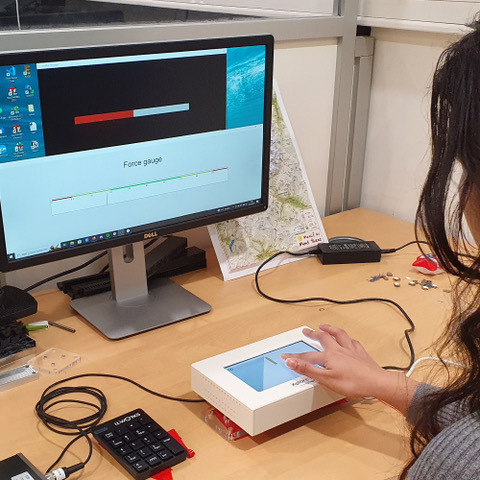

Fazlollahi, F., Taghizadeh, Z., Kuchenbecker, K. J.

Improving Haptic Rendering Quality by Measuring and Compensating for Undesired Forces

Work-in-progress paper (1 page) presented at the IEEE World Haptics Conference (WHC), Delft, The Netherlands, July 2023 (misc)

Khojasteh, B., Shao, Y., Kuchenbecker, K. J.

Capturing Rich Auditory-Haptic Contact Data for Surface Recognition

Work-in-progress paper (1 page) presented at the IEEE World Haptics Conference (WHC), Delft, The Netherlands, July 2023 (misc)

Gong, Y., Javot, B., Lauer, A. P. R., Sawodny, O., Kuchenbecker, K. J.

AiroTouch: Naturalistic Vibrotactile Feedback for Telerobotic Construction

Hands-on demonstration presented at the IEEE World Haptics Conference, Delft, The Netherlands, July 2023 (misc)

Javot, B., Nguyen, V. H., Ballardini, G., Kuchenbecker, K. J.

CAPT Motor: A Strong Direct-Drive Haptic Interface

Hands-on demonstration presented at the IEEE World Haptics Conference, Delft, The Netherlands, July 2023 (misc)

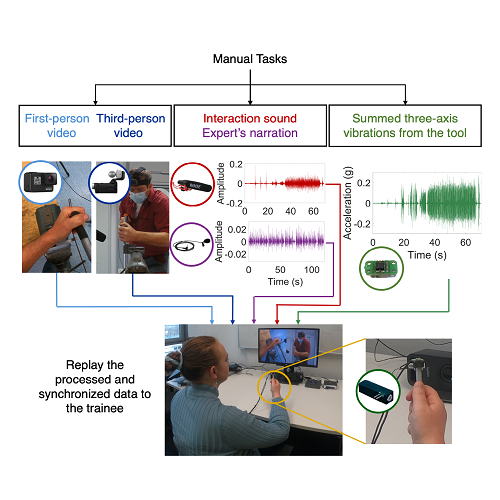

Gourishetti, R., Javot, B., Kuchenbecker, K. J.

Can Recording Expert Demonstrations with Tool Vibrations Facilitate Teaching of Manual Skills?

Work-in-progress paper (1 page) presented at the IEEE World Haptics Conference (WHC), Delft, The Netherlands, July 2023 (misc)

Burns, R. B.

Creating a Haptic Empathetic Robot Animal for Children with Autism

Workshop paper (4 pages) presented at the RSS Pioneers Workshop, Daegu, South Korea, July 2023 (misc)

Gueorguiev, D., Rohou–Claquin, B., Kuchenbecker, K. J.

The Influence of Amplitude and Sharpness on the Perceived Intensity of Isoenergetic Ultrasonic Signals

Work-in-progress paper (1 page) presented at the IEEE World Haptics Conference (WHC), Delft, The Netherlands, July 2023 (misc)

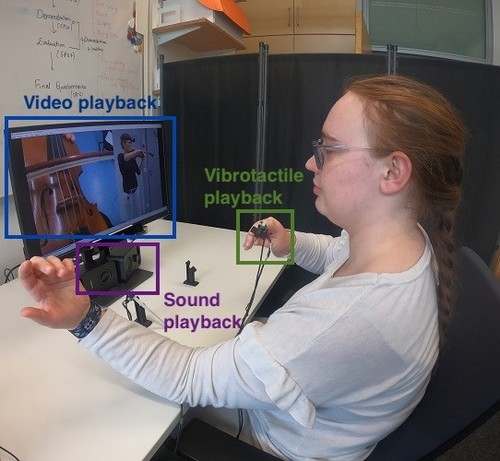

Gourishetti, R., Hughes, A. G., Javot, B., Kuchenbecker, K. J.

Vibrotactile Playback for Teaching Manual Skills from Expert Recordings

Hands-on demonstration presented at the IEEE World Haptics Conference, Delft, The Netherlands, July 2023 (misc)

Gong, Y., Javot, B., Lauer, A. P. R., Sawodny, O., Kuchenbecker, K. J.

Naturalistic Vibrotactile Feedback Could Facilitate Telerobotic Assembly on Construction Sites

Poster presented at the ICRA Workshop on Future of Construction: Robot Perception, Mapping, Navigation, Control in Unstructured and Cluttered Environments, London, UK, May 2023 (misc)

Gong, Y., Tashiro, N., Javot, B., Lauer, A. P. R., Sawodny, O., Kuchenbecker, K. J.

AiroTouch: Naturalistic Vibrotactile Feedback for Telerobotic Construction-Related Tasks

Extended abstract (1 page) presented at the ICRA Workshop on Communicating Robot Learning across Human-Robot Interaction, London, UK, May 2023 (misc)

Caccianiga, G., Nubert, J., Hutter, M., Kuchenbecker, K. J.

3D Reconstruction for Minimally Invasive Surgery: Lidar Versus Learning-Based Stereo Matching

Workshop paper (2 pages) presented at the ICRA Workshop on Robot-Assisted Medical Imaging, London, UK, May 2023 (misc)

Khojasteh, B., Shao, Y., Kuchenbecker, K. J.

Surface Perception through Haptic-Auditory Contact Data

Workshop paper (4 pages) presented at the ICRA Workshop on Embracing Contacts, London, UK, May 2023 (misc)

Mohan, M., Kuchenbecker, K. J.

OCRA: An Optimization-Based Customizable Retargeting Algorithm for Teleoperation

Workshop paper (3 pages) presented at the ICRA Workshop Toward Robot Avatars, London, UK, May 2023 (misc)

Rokhmanova, N.

Wearable Biofeedback for Knee Joint Health

Extended abstract (5 pages) presented at the ACM SIGCHI Conference on Human Factors in Computing Systems (CHI) Doctoral Consortium, Hamburg, Germany, April 2023 (misc)

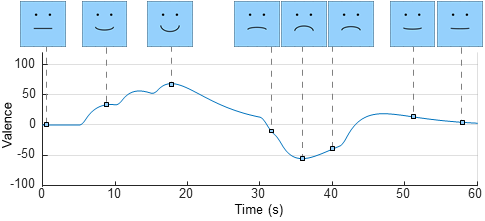

Burns, R. B., Kuchenbecker, K. J.

A Lasting Impact: Using Second-Order Dynamics to Customize the Continuous Emotional Expression of a Social Robot

Workshop paper (5 pages) presented at the HRI Workshop on Lifelong Learning and Personalization in Long-Term Human-Robot Interaction (LEAP-HRI), Stockholm, Sweden, March 2023 (misc)

Bottou, L., Schölkopf, B.

Borges und die Künstliche Intelligenz

2023, published in Frankfurter Allgemeine Zeitung, 18 December 2023, No. 294 (misc)

2022

Richardson, B. A., Kuchenbecker, K. J., Martius, G.

A Sequential Group VAE for Robot Learning of Haptic Representations

pages: 1-11, Workshop paper (8 pages) presented at the CoRL Workshop on Aligning Robot Representations with Humans, Auckland, New Zealand, December 2022 (misc)

L’Orsa, R., Lotbiniere-Bassett, M. D., Zareinia, K., Lama, S., Westwick, D., Sutherland, G., Kuchenbecker, K. J.

Semi-Automated Robotic Pleural Cavity Access in Space

Poster presented at the Canadian Space Health Research Symposium (CSHRS), Alberta, Canada, November 2022 (misc)

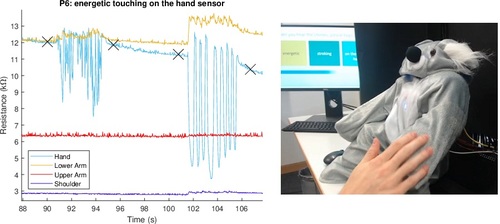

Burns, R. B., Rosenthal, R., Garg, K., Kuchenbecker, K. J.

Do-It-Yourself Whole-Body Social-Touch Perception for a NAO Robot

Workshop paper (1 page) presented at the IROS Workshop on Large-Scale Robotic Skin: Perception, Interaction and Control, Kyoto, Japan, October 2022 (misc)

Andrussow, I., Sun, H., Kuchenbecker, K. J., Martius, G.

A Soft Vision-Based Tactile Sensor for Robotic Fingertip Manipulation

Workshop paper (1 page) presented at the IROS Workshop on Large-Scale Robotic Skin: Perception, Interaction and Control, Kyoto, Japan, October 2022 (misc)

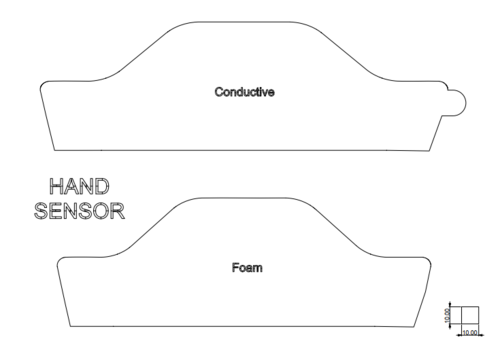

Burns, R. B., Lee, H., Seifi, H., Faulkner, R., Kuchenbecker, K. J.

Sensor Patterns Dataset for Endowing a NAO Robot with Practical Social-Touch Perception

Dataset published as a companion to the journal article "Endowing a NAO Robot with Practical Social-Touch Perception" in Frontiers in Robotics and AI, October 2022 (misc)

Rokhmanova, N., Kuchenbecker, K. J., Shull, P. B., Ferber, R., Halilaj, E.

Predicting Knee Adduction Moment Response to Gait Retraining

Extended abstract presented at the North American Congress of Biomechanics (NACOB), Ottawa, Canada, August 2022 (misc)

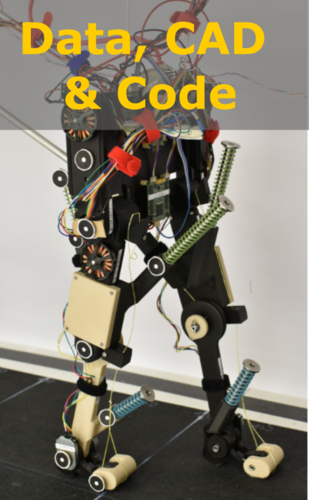

Kiss, B., Gonen, E. C., Mo, A., Buchmann, A., Renjewski, D., Badri-Spröwitz, A.

Data of: Gastrocnemius and Power Amplifier Soleus Spring-Tendons Achieve Fast Human-like Walking in a Bipedal Robot

July 2022 (misc)

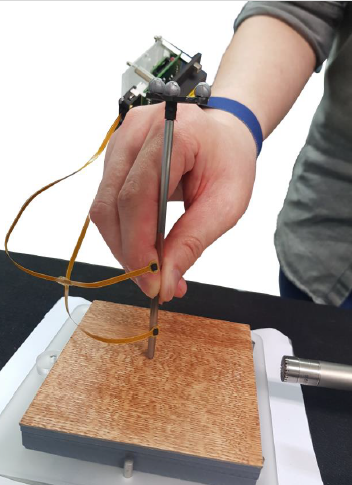

L’Orsa, R., Zareinia, K., Westwick, D., Sutherland, G., Kuchenbecker, K. J.

A Sensorized Needle-Insertion Device for Characterizing Percutaneous Thoracic Tool-Tissue Interactions

Short paper (2 pages) presented at the Hamlyn Symposium on Medical Robotics (HSMR), London, UK, June 2022 (misc)

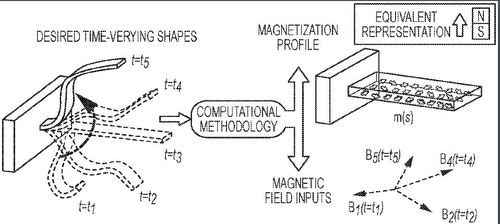

Lum, G. Z., Ye, Z., Sitti, M.

Method of fabricating a shape-changeable magnetic member, method of producing a shape changeable magnetic member and shape changeable magnetic member

June 2022, US Patent 11,373,791 (misc)

Caccianiga, G., Kuchenbecker, K. J.

Dense 3D Reconstruction Through Lidar: A New Perspective on Computer-Integrated Surgery

Short paper (2 pages) presented at the Hamlyn Symposium on Medical Robotics (HSMR), London, UK, June 2022 (misc)

Fazlollahi, F., Kuchenbecker, K. J.

Comparing Two Grounded Force-Feedback Haptic Devices

Hands-on demonstration presented at EuroHaptics, Hamburg, Germany, May 2022 (misc)

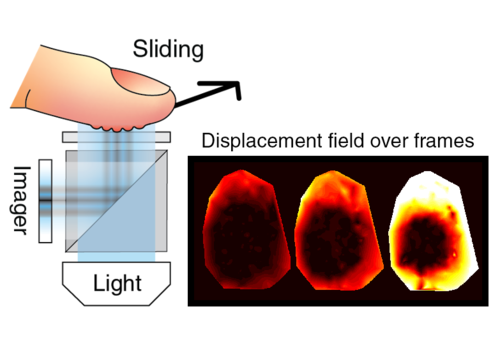

Nam, S., Gueorguiev, D., Kuchenbecker, K. J.

Finger Contact during Pressing and Sliding on a Glass Plate

Poster presented at the EuroHaptics Workshop on Skin Mechanics and its Role in Manipulation and Perception, Hamburg, Germany, May 2022 (misc)

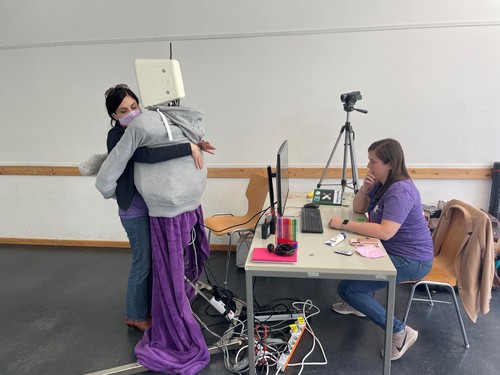

Block, A. E., Seifi, H., Christen, S., Javot, B., Kuchenbecker, K. J.

HuggieBot: A Human-Sized Haptic Interface

Hands-on demonstration presented at EuroHaptics, Hamburg, Germany, May 2022, Award for best hands-on demonstration (misc)

Burns, R. B., Lee, H., Seifi, H., Faulkner, R., Kuchenbecker, K. J.

User Study Dataset for Endowing a NAO Robot with Practical Social-Touch Perception

Dataset published as a companion to the journal article "Endowing a NAO Robot with Practical Social-Touch Perception" in Frontiers in Robotics and AI, April 2022 (misc)

Wang, H., Jin, Z., Cao, J., Fung, G. P. C., Wong, K.

Inconsistent Few-Shot Relation Classification via Cross-Attentional Prototype Networks with Contrastive Learning

2022 (misc)

2021

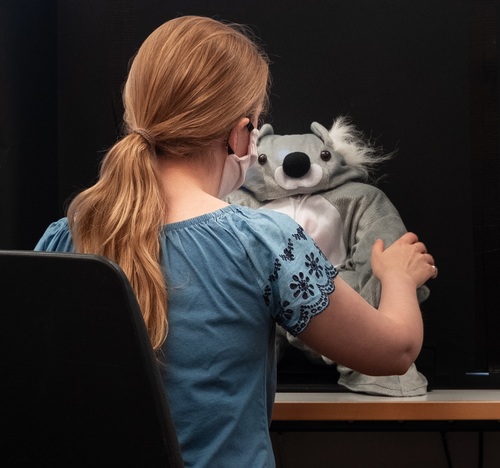

Burns, R. B.

Teaching Safe Social Touch Interactions Using a Robot Koala

Workshop paper (1 page) presented at the IROS Workshop on Proximity Perception in Robotics: Increasing Safety for Human-Robot Interaction Using Tactile and Proximity Perception, Prague, Czech Republic, September 2021 (misc)